Ethical Marketing Automation: How Much AI Is Too Much?

Marketing automation can overstep privacy boundaries, desensitize audiences, and create compliance risk. Effective marketing balances AI efficiency with ethical safeguards, transparency, and respect for user consent.

TL;DR

- The smartest teams use AI in marketing to personalize with consent, not surveillance.

- Treat data like a privilege: build risk management and compliance into workflows from day one.

- The real question isn’t whether to use AI, but how much AI your customers will welcome before trust erodes.

- AI automation works best with clear labeling, human oversight, and visible value exchange.

- Create explicit guidelines for AI usage, backed by strong risk management and compliance, and you’ll capture ROI without tripping regulatory wires.

If you lead growth, you’ve probably felt the tension. Customers love helpful personalization and faster service, but they’re increasingly wary of opaque data practices and black-box models deciding what they see.

The sweet spot exists, and it’s measurable. When teams deploy AI in marketing with the right boundaries, they see higher conversion, stronger loyalty, and fewer regulatory headaches. When they push too far, they trigger backlash that’s expensive to unwind.

Let’s answer the question head-on: how much AI is “too much”? The short version: use only as much AI as your audience is comfortable with, and no more. That’s not a dodge; it’s a strategy.

You can quantify comfort with consent rates, opt-outs, trust surveys, and complaint patterns. Then you can scale AI automation to your own risk tolerance and your customers’ preferences, with a governance layer designed to prevent overreach, anchored in risk management and compliance. In practice, that’s how enterprise marketers balance ambition with accountability when using AI in marketing.

Below, we show how the data, the regulations, and the revenue case all point to the same conclusion: AI usage must pair precision with transparency, and personalization has to feel earned, not extracted.

Why does ethical AI automation matter right now?

Because consumer trust and revenue are linked, and both are on the line. Globally, 64% of people prefer brands that tailor experiences, but only about one-third trust companies to use their data responsibly, according to Qualtrics XM Institute.

People will share basic details like purchase history (45% are comfortable), but they balk at sensitive information (only 12–17% are OK with sharing financial or social-media data). In the U.S., a growing majority worries about unethical AI usage. Salesforce highlights that many consumers have concerns about how much AI is used in marketing, and Pew Research Center reports that roughly 70% have little or no trust that companies will deploy AI automation responsibly.

The message is clear: AI automation is desired, but only on transparent terms. That’s where good governance makes the difference. Teams that normalize consent, explain their data logic, and allow control find that AI in marketing becomes an asset to customer relationships, not a liability. Meanwhile, pressure from regulators is intensifying, making proactive risk management and compliance a competitive advantage rather than a box-check.

How do consumers draw the line between helpful and creepy?

In short, relevance without context feels invasive. Surveys cited by Forbes show only 14% of people find hyper-targeted ads helpful, while 40% call them “unnerving” and 27% see them as a privacy violation.

Nearly one-third say they’d abandon a brand that targets them too much. That’s your practical boundary for how much AI you should lean on for targeting.

What pushes things into the “creepy” zone? Using inferred sensitive traits without consent (e.g., health or finances), remarketing based on off-platform behaviors users didn’t expect you to track, or automating messages that sound overly intimate.

The antidote is simple but non-negotiable: tell people what you’re doing and why, let them say no, and avoid sensitive signals unless users explicitly opt in. Keep it clear and value-forward.

Why does transparency lift revenue?

Because customers support brands that respect them. Research summarized by CMSWire notes that consumers are more likely to support companies they view as transparent with AI usage (reflecting findings from McKinsey and Cisco).

Cisco’s benchmark data further shows 92% of consumers want companies to do more to protect privacy, and 61% have abandoned organizations over poor data practices. This isn’t just defensive risk control; it’s a growth strategy. That’s why risk management and compliance increasingly sit at the center of high-performing AI marketing teams. Teams that invest in preference centers, granular opt-in/out controls, and clear disclosures find that the trust dividend shows up in acquisition, retention, and pricing power.

The same math holds in performance. Marketers reported that AI usage exceeded ROI expectations in 2024 and positively impacted revenue, according to MarTech Vibe. HubSpot’s data shows companies increased revenue with AI-enabled campaigns, and industry analysts project that a large share of B2B buying interactions will be automated in the coming years.

A large field study of e-commerce interactions suggests AI automation can significantly increase ROAS and lift conversions, even while raising acquisition costs, and trust emerged as a top predictor of conversion. Net-net: you can scale AI in marketing for real gains, but returns are maximized only when customers feel in control.

How do you decide how much AI your audience will accept?

Start by answering in one sentence: You should deploy only the level of AI automation your customers have consented to and that your team can explain and govern. Then, validate that sentence with data. At scale, automation decisions must be proportional to your risk management and compliance maturity.

1. Map sensitivity by use case:

- Low-sensitivity use cases (e.g., product recommendations based on on-site behavior) are generally safer than high-sensitivity ones (e.g., inferring life events from third-party data).

- Use a simple green/yellow/red classification based on sensitivity and user expectations. Green zones are “always on.” Yellow requires transparent notices and opt-ins. Red needs explicit consent and executive review, or should be avoided.

2. Instrument comfort metrics:

- Track opt-in rates, opt-out rates, complaint volumes, and “creepy” feedback keywords in support tickets.

- Run quarterly trust pulse surveys: “Rate our personalization as helpful vs. intrusive.” If the “intrusive” score rises as you increase AI in marketing, you’ve crossed the line.

3. Test, don’t assume:

- Pilot in small cohorts. Compare against a control. Look for revenue lift without upticks in unsubscribes or negative sentiment.

- If you’re scaling a new tactic, double-gate it: a business case review (ROI, KPIs, confidence intervals) and a customer trust review (disclosures, consent path, harms assessment).

4. Tie spend to governance maturity:

- The more automated a tactic, the stronger the audit trail, labeling, and human oversight you need.

- If you can’t explain a model’s decisions or trace its inputs, don’t let it touch paid media or lifecycle messaging at scale.
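The “test, don’t assume” step can be reduced to a simple go/no-go rule. Below is a minimal Python sketch, assuming you already have conversion and unsubscribe counts for a pilot cohort and a control; the `CohortStats` type, the `pilot_passes` function, and both thresholds are hypothetical placeholders, and a real business case review would also check confidence intervals before scaling.

```python
from dataclasses import dataclass


@dataclass
class CohortStats:
    """Hypothetical per-cohort counts collected during a pilot."""
    recipients: int
    conversions: int
    unsubscribes: int

    def rate(self, count: int) -> float:
        # Share of recipients; guards against an empty cohort.
        return count / self.recipients if self.recipients else 0.0


def pilot_passes(pilot: CohortStats, control: CohortStats,
                 min_lift: float = 0.05,
                 max_unsub_increase: float = 0.001) -> bool:
    """Pass only if conversions lift without an uptick in unsubscribes.

    min_lift: required relative conversion lift over control (assumed 5%).
    max_unsub_increase: tolerated absolute rise in unsubscribe rate
    (assumed 0.1 percentage point).
    """
    control_cr = control.rate(control.conversions)
    if control_cr == 0:
        return False  # no baseline to measure lift against
    relative_lift = (pilot.rate(pilot.conversions) - control_cr) / control_cr
    unsub_delta = (pilot.rate(pilot.unsubscribes)
                   - control.rate(control.unsubscribes))
    return relative_lift >= min_lift and unsub_delta <= max_unsub_increase


control = CohortStats(recipients=10_000, conversions=200, unsubscribes=30)
pilot = CohortStats(recipients=10_000, conversions=230, unsubscribes=32)
print(pilot_passes(pilot, control))  # True: 15% lift, +0.02 pt unsubscribes
```

Requiring both conditions is the point: a tactic that lifts revenue while pushing people to unsubscribe fails the gate.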

| Use Case | Sensitivity Level | Disclosure & Consent |
|---|---|---|
| On-site product recommendations | Green | Brief “why you’re seeing this” message; honor opt-outs |
| Send-time optimization | Green | Covered in privacy notice; optional toggle in preference center |
| Third-party enrichment to infer life events | Red | Explicit opt-in; exec review; consider avoidance |
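To make the green/yellow/red classification enforceable rather than advisory, it can be encoded as a launch gate. This is a sketch under stated assumptions: the `Tier` enum, the `may_launch` function, and the use-case keys are hypothetical names, and the rules simply mirror the classification and the table above (opt-outs always win; yellow needs a notice plus opt-in; red needs explicit consent plus executive review).

```python
from enum import Enum


class Tier(Enum):
    GREEN = "green"    # always on, but opt-outs are honored
    YELLOW = "yellow"  # transparent notice plus opt-in
    RED = "red"        # explicit consent plus executive review, or avoid


# Hypothetical keys for the use cases in the table above.
USE_CASE_TIERS = {
    "on_site_recommendations": Tier.GREEN,
    "send_time_optimization": Tier.GREEN,
    "third_party_life_event_inference": Tier.RED,
}


def may_launch(use_case: str, *, opted_out: bool = False,
               notice_shown: bool = False, opted_in: bool = False,
               explicit_consent: bool = False,
               exec_approved: bool = False) -> bool:
    """Return True if a use case may run for a user, given approval state."""
    tier = USE_CASE_TIERS.get(use_case)
    if tier is None or opted_out:
        return False  # unclassified use cases and opted-out users never run
    if tier is Tier.GREEN:
        return True
    if tier is Tier.YELLOW:
        return notice_shown and opted_in
    return explicit_consent and exec_approved  # Tier.RED


print(may_launch("on_site_recommendations"))  # True
print(may_launch("third_party_life_event_inference",
                 explicit_consent=True))      # False: no exec review
```

Defaulting unclassified use cases to “no” forces every new tactic through classification before it can run.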

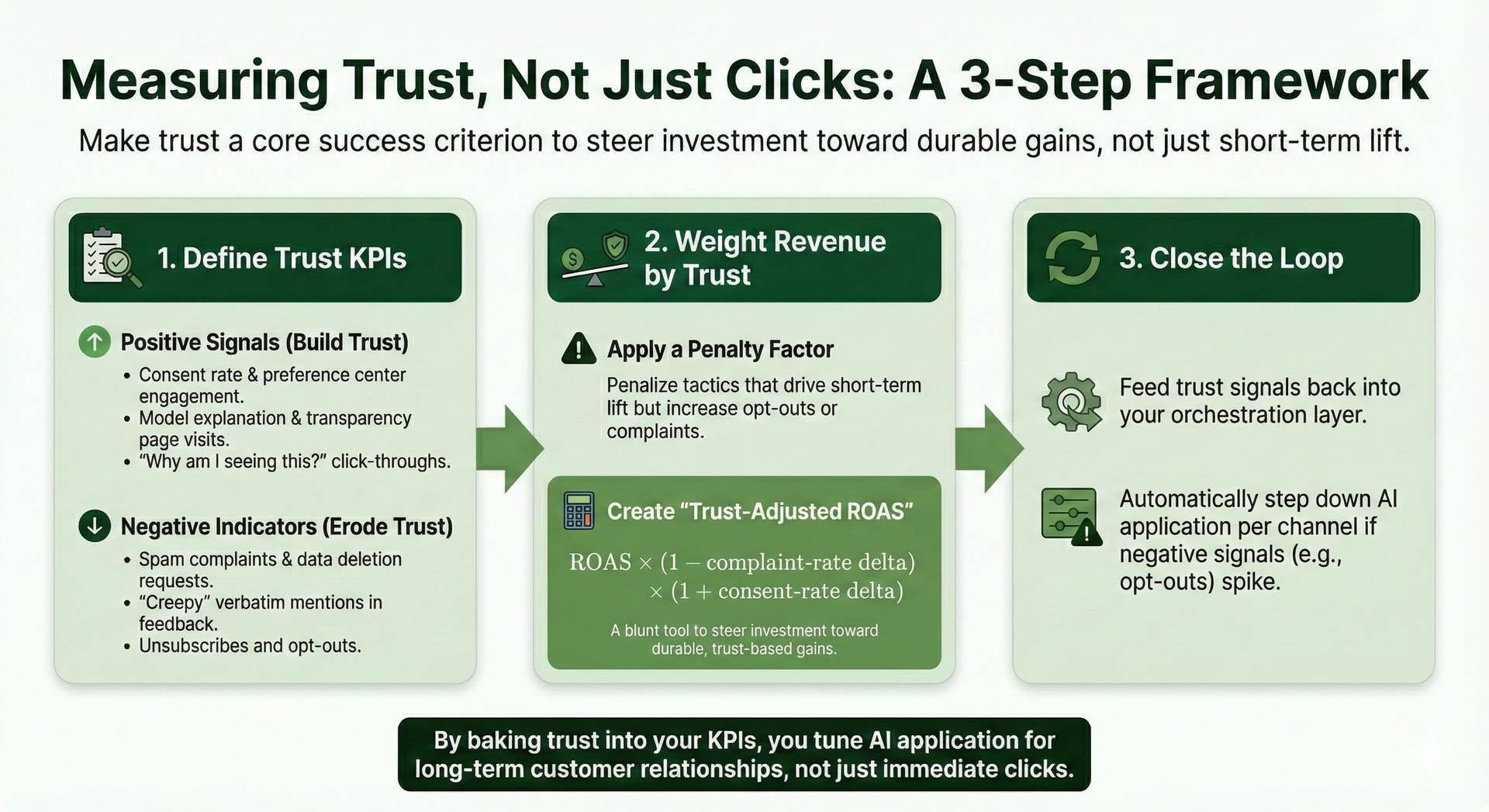

How should teams measure trust and not just clicks?

Bake trust into your KPIs, not as a side metric but as a core success criterion. Then use it to tune how much AI in marketing you apply per channel.

1. Define trust KPIs:

- Positive indicators: consent rate, preference center engagement, model explanation views, transparency page visits, and “Why am I seeing this?” click-through.

- Negative indicators: spam complaints, “creepy” verbatim mentions, unsubscribes, and data deletion requests.

2. Weight revenue by trust:

- Apply a penalty factor if a tactic drives short-term lift but increases opt-outs or complaints.

- Create a “Trust-Adjusted ROAS” score: ROAS × (1 − complaint-rate delta) × (1 + consent-rate delta). It’s a blunt tool, but it steers investment toward durable gains.

3. Close the loop:

- Feed trust signals back into your orchestration layer. For example, if a segment’s opt-outs spike, automatically step down personalization depth and switch to generic creative until sentiment normalizes.
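Here is one way to compute the Trust-Adjusted ROAS score and the automatic step-down described in this section. It is a minimal sketch: the formula comes directly from the text, but the text leaves open whether deltas are absolute or relative, so the example assumes relative changes versus a pre-launch baseline (0.10 means complaints rose 10%). The depth levels and the 1.5× opt-out spike threshold are hypothetical.

```python
def trust_adjusted_roas(roas: float, complaint_rate_delta: float,
                        consent_rate_delta: float) -> float:
    """ROAS × (1 − complaint-rate delta) × (1 + consent-rate delta)."""
    return roas * (1 - complaint_rate_delta) * (1 + consent_rate_delta)


# Hypothetical personalization depths, least to most intensive.
DEPTHS = ["generic", "contextual", "first_party_behavioral"]


def next_depth(current: str, opt_out_rate: float,
               baseline_opt_out_rate: float, spike_ratio: float = 1.5) -> str:
    """Step down one level when a segment's opt-outs spike above baseline.

    Repeated spikes walk the segment down to "generic" creative; restoring
    depth after sentiment normalizes is left to a human decision here.
    """
    idx = DEPTHS.index(current)
    if opt_out_rate > baseline_opt_out_rate * spike_ratio:
        return DEPTHS[max(idx - 1, 0)]
    return current


# ROAS of 4.0, complaints up 10%, consent up 5%:
print(round(trust_adjusted_roas(4.0, 0.10, 0.05), 2))  # 3.78
print(next_depth("first_party_behavioral", 0.03, 0.01))  # contextual
```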

What guardrails keep AI automation compliant and human?

The fastest way to align AI usage with regulators and users is to set four hard rules (explicit consent, visible transparency, human oversight, and data minimization) and back them with clear internal accountability and operational risk management and compliance. These rules should govern AI in marketing as a matter of routine, not exception.

1. Consent as a default:

- Offer granular opt-ins (e.g., “Use my purchase history to tailor emails” separate from “Use my social posts to inform offers”).

- Explain the value exchange in plain language. Research from Qualtrics shows most people aren’t against AI usage; they just want a say in how it happens.

2. Transparency by design:

- Label automated content, particularly in ads. The FTC has warned that AI usage in marketing must be clearly disclosed: no passing off bots as organic human posts.

- Disclose your use of AI automation in your terms and on your “About Our Data” page.

3. Human-in-the-loop:

- Require human review of high-impact campaigns, audiences, and automated decisions.

- Run periodic bias and privacy audits. NIST’s AI Risk Management Framework is an excellent guide for operationalizing reviews across mapping, measurement, and governance.

4. Data minimization:

- Collect only what you need for a defined benefit. Prohibit sensitive inferences without explicit consent.

- Set AI usage limits. Establish deletion policies tied to user inactivity and withdrawal of consent.

5. Clear internal accountability:

- Define who owns model performance, ethics review, and approvals for higher-risk experiments.

- Train your marketers, not just your data scientists. Everyone who touches AI automation should understand the playbook and escalation path.
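Data minimization and deletion are easiest to enforce when they are written down as rules a pipeline can check. The sketch below assumes a per-purpose field allowlist, a hypothetical set of sensitive fields that additionally require explicit consent, and a 365-day inactivity window; all field names and the window length are placeholders to agree with Legal, not recommendations.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone

# Hypothetical allowlist: the only fields each defined purpose may use.
ALLOWED_FIELDS = {
    "email_personalization": {"email", "first_name", "purchase_history"},
    "send_time_optimization": {"email", "open_timestamps"},
}
# Hypothetical sensitive fields: usable only with explicit consent.
SENSITIVE_FIELDS = {"income_estimate", "health_interest", "social_posts"}
INACTIVITY_LIMIT = timedelta(days=365)  # assumed retention window


def minimize(record: dict, purpose: str,
             consented_sensitive: frozenset[str] = frozenset()) -> dict:
    """Keep only allowlisted fields; sensitive ones also need explicit consent."""
    allowed = ALLOWED_FIELDS.get(purpose, set())
    return {
        k: v for k, v in record.items()
        if k in allowed and (k not in SENSITIVE_FIELDS or k in consented_sensitive)
    }


def should_delete(last_active: datetime, consent_withdrawn: bool,
                  now: datetime | None = None) -> bool:
    """Delete on consent withdrawal or after the inactivity window lapses.

    Expects timezone-aware datetimes.
    """
    now = now or datetime.now(timezone.utc)
    return consent_withdrawn or (now - last_active) > INACTIVITY_LIMIT


record = {"email": "a@example.com", "first_name": "Ana",
          "income_estimate": 90_000, "social_posts": ["post text"]}
print(minimize(record, "email_personalization"))
# {'email': 'a@example.com', 'first_name': 'Ana'}
```

An unknown purpose gets an empty allowlist, so new uses of data start with nothing until someone defines the benefit.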

How do you operationalize risk management and compliance without slowing growth?

You make it programmatic, turning risk management and compliance into a repeatable growth enabler. Use a framework, codify approvals, and automate the parts that don’t require judgment, so your team can confidently scale AI in marketing.

1. Build on standards:

- Implement the NIST AI Risk Management Framework as your backbone for identifying, measuring, and governing risk across systems and use cases.

- Align with regional laws, including the GDPR and the CCPA, on data rights; follow the FTC’s Endorsement Guides and its guidance on disclosing generative AI content; and track the EU AI Act’s prohibitions on harmful manipulation and exploitative targeting.

2. Strengthen endorsements and disclosures:

- Disclose paid relationships and clearly label synthetic media in ads. The FTC has made clear that misleading AI content and undisclosed endorsements won’t be tolerated.

3. Automate the guardrails:

- Add consent status checks to your audience builder: no consent, no AI usage.

- Auto-flag segments that rely on sensitive signals for manual review.

- Require a “model card” for each production model: purpose, training data sources, evaluation metrics, known limitations, and explainability notes.

4. Monitor the environment:

- Privacy enforcement is rising fast. Keep a living register of applicable laws and rulings, with a quarterly review cycle.

5. Document everything:

- Store key decisions, approvals, and test results in a governance system of record. If regulators come calling, or a customer complains, you can show your work.
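The first three automated guardrails can be sketched in a few lines. This example assumes a hypothetical per-contact consent flag and a hypothetical list of sensitive signal names, and it checks for the model card fields listed above; a real audience builder or CDP will have its own data model.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical signal names treated as sensitive.
SENSITIVE_SIGNALS = {"health", "finances", "life_events"}
# Model card fields named in this section.
REQUIRED_MODEL_CARD_FIELDS = (
    "purpose", "training_data_sources", "evaluation_metrics",
    "known_limitations", "explainability_notes",
)


@dataclass
class Contact:
    contact_id: str
    ai_consent: bool  # hypothetical flag synced from the preference center


@dataclass
class Segment:
    name: str
    signals: set[str]
    contacts: list[Contact] = field(default_factory=list)


def build_audience(segment: Segment) -> tuple[list[Contact], bool]:
    """Apply "no consent, no AI usage" and flag sensitive segments for review."""
    eligible = [c for c in segment.contacts if c.ai_consent]
    needs_manual_review = bool(segment.signals & SENSITIVE_SIGNALS)
    return eligible, needs_manual_review


def missing_model_card_fields(card: dict) -> list[str]:
    """List required model card fields that are absent or empty."""
    return [f for f in REQUIRED_MODEL_CARD_FIELDS if not card.get(f)]


seg = Segment("reactivation", {"purchase_history", "finances"},
              [Contact("c1", True), Contact("c2", False)])
eligible, review = build_audience(seg)
print([c.contact_id for c in eligible], review)  # ['c1'] True
```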

Where does AI deliver ROI without risking trust?

Focus on use cases with high perceived value and low perceived intrusiveness. Start here, then expand gradually, especially if you’re evaluating the best AI marketing automation tools to orchestrate workflows across channels.

- Product and content recommendations based on on-site behavior: Users expect a site to remember their browsing. Make it better with clear “why you’re seeing this” messaging.

- Send-time optimization and frequency capping: Reduce noise. Let models help decide when and how often to send, then cap frequency to respect attention.

- Creative drafting with human editing: Use models to draft variations, and keep humans in the loop to ensure tone, accuracy, and brand safety. This is a staple of responsible AI in marketing.

- Predictive lifecycle triggers that exclude sensitive attributes: Base reactivation or upsell on first-party interactions rather than third-party enrichment.

- Customer service augmentation: Triage and summarize cases to speed resolution, while ensuring an easy handoff to a human.
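For send-time optimization, the model can pick when to send while a plain rule decides whether to send at all. A minimal sketch of that frequency cap, assuming a trailing seven-day window and a maximum of three messages per contact (both hypothetical values):

```python
from __future__ import annotations

from datetime import datetime, timedelta


def within_frequency_cap(sent_times: list[datetime], proposed: datetime,
                         max_sends: int = 3,
                         window: timedelta = timedelta(days=7)) -> bool:
    """True if the contact got fewer than max_sends messages in the trailing
    window ending at the model's proposed send time."""
    recent = [t for t in sent_times if proposed - window < t <= proposed]
    return len(recent) < max_sends


proposed = datetime(2025, 3, 10, 9, 0)  # send time suggested by a model
sent = [proposed - timedelta(days=d) for d in (1, 2, 3)]
print(within_frequency_cap(sent, proposed))                      # False
print(within_frequency_cap(sent, proposed + timedelta(days=5)))  # True
```

Keeping the cap outside the model means it holds even if the model’s timing logic changes.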

| Channel | Trust-Friendly Tactics | Common Pitfalls |
|---|---|---|
| Email | Send-time optimization; preference center-powered segments | Over-personalized subject lines using sensitive inferences |
| Ads | Contextual targeting; modeled lookalikes from first-party data | Retargeting based on off-platform tracking without clear consent |
| Web | Explainable recommendations; “Why this?” tooltips | Hidden personalization with no disclosure path |
| Social | Labeled synthetic media; moderated AI responses with human review | Undisclosed AI influencers or testimonials |

How do you choose privacy-first tools your legal team will love?

Use a buyer’s checklist that prioritizes control and auditability. And when you evaluate vendors, ask pointed questions that map to your governance model. This is where the best AI tools for marketing that protect user privacy truly stand out, and where the best AI marketing automation tools can streamline compliance as you scale AI in marketing.

1. Must-have capabilities:

- Consent-aware orchestration: the platform should automatically respect opt-ins across channels, table stakes for the best AI tools for marketing that protect user privacy.

- Data minimization controls: disable ingestion of sensitive fields you don’t need, a hallmark of the best AI marketing automation tools.

- Model transparency: documentation and dashboards that let you review inputs, outputs, and performance.

- Content labeling: a native way to disclose when creative or copy is machine-generated.

- Regional compliance: support for user rights requests and audit logging.

2. Ask vendors for proof:

- “Show me your model cards and privacy impact assessments.” This is a must when evaluating AI automation that protects user privacy.

- “Demonstrate how opt-out propagates through all downstream processes.”

- “Explain how you prevent sensitive inferences” in both ads and social, key to finding the top AI tools for social media marketing with privacy safeguards.

3. Search for the right fit:

- Explore the best AI tools for marketing that protect user privacy; prioritize platforms that include explainability and consent-aware workflows out of the box.

- Shortlist the top AI tools for social media marketing with privacy safeguards, especially those with built-in UTM governance, comment moderation controls, and disclosure features.

- Favor the best AI marketing automation tools that offer granular role permissions and comprehensive audit logs.

Want a grounded recommendation tailored to your stack and compliance needs? The BusySeed team can help you build a shortlist and rollout plan. Start here: BusySeed.

How do you pilot ethically in 90 days?

Aim for a tightly scoped, well-governed pilot that proves lift and builds internal confidence. This is a practical on-ramp to AI in marketing.

1. Weeks 1–2: Define the guardrails:

- Draft a one-page policy that sets your stance on consent, disclosures, human review, and sensitive data. Approve it with Legal.

- Create a risk register for the pilot that includes potential harms, likelihoods, mitigations, and an escalation path.

2. Weeks 3–4: Stand up the data foundation:

- Clean first-party data, implement preference center updates, and ensure consent flags flow into your CDP and ad platforms.

- Add clear disclosure language to your privacy pages and relevant touchpoints.

3. Weeks 5–6: Build and test:

- Launch two to three low-risk use cases (e.g., product recommendations, send-time optimization).

- Prepare human-reviewed creative variations and clearly label any generative components.

4. Weeks 7–8: Measure and learn:

- Track performance, trust KPIs, and any complaints in real time. If negative sentiment rises, step down personalization depth.

- Run fairness checks on the modeled segments to ensure there is no unintended bias.

5. Weeks 9–10: Decide on scale:

- Present Trust-Adjusted ROAS and compliance findings to stakeholders.

- If greenlit, document a scale plan with fresh approvals, updated disclosures, and training for all practitioners.

6. Weeks 11–12: Institutionalize:

- Write a post-mortem. Update your playbook. Make the model card and data protection impact assessment (DPIA) part of your standard go-live checklist.

- Train teams on your process, then select the next wave of use cases.
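The fairness check in weeks 7–8 can start with something as simple as comparing how often each group lands in a modeled segment. The sketch below swaps in one common heuristic, the “four-fifths” selection-rate ratio (flag any group selected at under 80% of the top group’s rate); it is a screening signal rather than a legal test, and it assumes you hold group labels you are permitted to use for auditing. Group names and counts are hypothetical.

```python
from __future__ import annotations


def selection_rate_ratios(selected: dict[str, int],
                          totals: dict[str, int]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = {g: selected.get(g, 0) / n for g, n in totals.items() if n > 0}
    if not rates:
        return {}
    top = max(rates.values())
    return {g: (r / top if top else 1.0) for g, r in rates.items()}


def flag_groups(selected: dict[str, int], totals: dict[str, int],
                threshold: float = 0.8) -> list[str]:
    """Groups whose ratio falls below the threshold (0.8 = four-fifths)."""
    ratios = selection_rate_ratios(selected, totals)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


totals = {"group_a": 1000, "group_b": 1000, "group_c": 500}
selected = {"group_a": 300, "group_b": 270, "group_c": 100}
print(flag_groups(selected, totals))  # ['group_c']
```

A flagged group is a prompt for human review of the segment’s inputs, not an automatic verdict of bias.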

What should you stop doing immediately?

- Inferring or buying sensitive attributes without explicit consent.

- Serving synthetic testimonials or AI personas that appear to be real people without clear disclosure; this invites regulatory action.

- Targeting vulnerable populations with manipulative creative or pressure tactics.

- Running black-box models in paid media without human review and a clear audit trail.

- Ignoring audience signals about how much AI in marketing they find acceptable. Rising opt-outs, complaints, and “creepy” feedback are telling you to slow down.

How does the regulatory climate change your roadmap?

Regulators are publishing sharper guidance and stepping up enforcement. The FTC has warned against deceptive generative content and misleading endorsements, stressing that companies must label AI outputs and avoid manipulative design.

The EU AI Act prohibits harmful manipulation and exploitative targeting, especially of vulnerable groups. U.S. privacy enforcement continues to expand at the state level, and data protection authorities globally are increasing scrutiny. The direction of travel is undeniable: build compliance into your architecture rather than retrofitting it later.

This is where disciplined risk management and compliance, drawing on NIST’s AI guidance and clear approvals, helps teams move faster with less risk. Get your baseline right, then scale with confidence using the best AI automation practices.

BusySeed’s POV: Practical, privacy-first momentum

BusySeed builds programs where customers feel taken care of, not tracked. We blend industry insight with practical playbooks so your team can win today and keep winning.

If you want support selecting the best AI marketing automation tools, we’re here to help: warmly, directly, and with the savvy to get you live fast.

Explore our approach and book a friendly consultation with us here at BusySeed. You can also learn more about our services and process on our site’s core pages: Services and About.

FAQ: Practical answers for ethical, ROI-positive AI automation

Q1) What are the best AI tools for marketing that protect user privacy?

They should provide consent-aware orchestration, granular data minimization, transparent model documentation, and built-in AI content labeling. Look for native support for user rights requests and audit logs. Bonus points for explainability dashboards and model cards you can share with Legal. These capabilities let you confidently scale AI in marketing while demonstrating compliance.

Q2) Which are the top AI tools for social media marketing with privacy safeguards?

They include automated disclosure tags, approval workflows, robust brand-safety filters, and moderation assistants that seamlessly hand off to humans. They should sync preference and opt-out states across channels and support audit trails for every post and response. This is where the line between helpful and creepy is most visible, so choose carefully.

Q3) What are the best AI marketing automation tools that prioritize privacy?

They unify orchestration, analytics, and consent management. You should be able to tune personalization depth by audience, disable sensitive signals globally, and export proofs for auditors in minutes. When these tools integrate with social suites, your governance becomes a built-in advantage.

Q4) How should we set thresholds for model-driven personalization without risking trust?

Start conservatively: focus on first-party behaviors, exclude sensitive inferences, and cap frequency. Tie expansion to improvements in trust KPIs and conversion. If engagement rises and complaints fall, add sophistication; if not, pull back. This test-and-learn approach to AI in marketing keeps customers in control while you discover the limits of comfort.

Q5) Which metrics prove ethical AI automation is working, beyond ROAS?

Track consent-rate changes, preference center usage, “Why am I seeing this?” interactions, complaint rates, and qualitative feedback. We recommend a Trust-Adjusted ROAS that penalizes lift if complaints rise and rewards lift if consent improves.

A smarter path forward

Your customers want better experiences, but not at the price of privacy or control. When you approach AI in marketing with transparency, choice, and a strong governance backbone, you unlock the upside (more relevance, better service, and stronger loyalty) while keeping your reputation and legal risk in check.

If you’re ready to formalize your playbook (where to start, what to avoid, and how to measure impact), let’s talk.

Schedule a free consult to map your roadmap with us. We’ll meet you where you are and get you where you want to go.

Works Cited

“AI Risk Management Framework.” National Institute of Standards and Technology, 2023, https://www.nist.gov/itl/ai-risk-management-framework.

“Endorsements, Influencers, and Reviews.” Federal Trade Commission, 2023, https://www.ftc.gov/business-guidance/advertising-marketing/endorsements-influencers-reviews.

“The Luring Test: AI and the Engineering of Deception.” Federal Trade Commission, 3 May 2023, https://www.ftc.gov/business-guidance/blog/2023/05/luring-test-ai-and-engineering-deception.

“European AI Act.” European Commission, 2024, https://digital-strategy.ec.europa.eu/en/policies/european-ai-act.

“How Americans View Data Privacy.” Pew Research Center, 18 Oct. 2023, https://www.pewresearch.org/internet/2023/10/18/how-americans-view-data-privacy/.

“Responsible AI in Marketing: Ethics and Trust.” Salesforce, 2024, https://www.salesforce.com/blog/responsible-artificial-intelligence-marketing-automation-ethics/.

“Targeted Ads Are Getting ‘Creepy,’ Consumers Say.” Forbes, 19 Mar. 2025, https://www.forbes.com/sites/cmo/2025/03/19/targeted-ads-are-getting-creepy-consumers-say/.

“Building a Trust-First Brand: Transparency and Consent in Marketing.” CMSWire, 2024, https://www.cmswire.com/digital-marketing/building-a-trust-first-brand-transparency-and-consent-in-marketing/.

“Consumer Privacy and Personalization 2025.” Qualtrics XM Institute, 2025, https://www.xminstitute.com/research/consumer-privacy-personalization-2025/.

“Marketers Say AI Tools Exceeded ROI Expectations in 2024.” MarTech Vibe, 2024, https://martechvibe.com/article/marketers-say-ai-tools-exceeded-roi-expectations-in-2024/.