Does Meta Penalize LLM-Generated Ads? How Human Creative Really Performs

Platforms can detect patterns, sameness, and synthetic creative signals. Human-led messaging consistently outperforms when it introduces novelty, emotional contrast, and brand voice. AI works best as an amplifier, not the origin.

Let’s cut through the noise. Meta does not punish you for using AI. What matters is whether your ads connect with people. In this guide, we unpack the latest research, what to test, and how to blend human craft with generative AI tools to scale performance without losing your brand voice. We’ll cover the data, practical steps, and a clear plan for winning with AI ads on social media, including Facebook and Instagram, so your AI vs human strategy stays strong and steady.

TL;DR

- Meta optimizes for outcomes, not your tools. There’s no direct penalty for AI ads; performance signals rule delivery.

- Visuals that “feel synthetic” underperform; well-crafted AI visuals can match or beat human creative in tests.

- Human copy still leads in nuance, emotion, specificity, and brand voice, which drive quality clicks.

- The winning approach is a hybrid: use generative AI tools for volume and variety; keep humans in charge of story and standards.

- Segment aggressively and rotate creative. Younger audiences may respond better to AI variants; older cohorts often prefer traditional treatments.

Want a custom testing plan, asset audit, or hybrid workflow? Our growth team is here to help. No pressure, just smart, direct guidance.

Start a strategy session.

Does Meta penalize LLM-generated content on Facebook and Instagram?

No. There’s no public policy that demotes AI ads on Facebook or Instagram. Meta’s system is outcome-driven: click-through rate, conversion quality, and user feedback determine delivery. The platform continues to ship AI-first features, including creative automation and Advantage+, with no sign of a penalty for using them. Independent reporting shows that when creative feels authentic and useful, results hold steady or improve, regardless of whether you used generative AI tools (ppc.land).

Here’s the nuance: when visuals look overly synthetic, people engage less. In a large analysis cited by industry coverage, AI ads that didn’t “look AI” delivered competitive (even stronger) CTRs with no drop in conversions (ppc.land). In short, the perception penalty is about polish and believability, not provenance. For your ads on social media, the system rewards resonance. That’s why we urge a warm, human lens, even when your workflow uses generative AI tools.

- Lean into authenticity cues: faces, texture, candid angles. These help AI ads feel real and inviting (ppc.land).

- Keep human review in the loop. Most marketers still apply editorial standards before publishing, which protects brand voice and trust (Yahoo Tech).

Curious how this plays inside your vertical? We can set up an AI vs human test and tune your asset rotation. Explore our services or check out our case studies for more context.

What does the research actually say about AI vs. human creative performance?

Visuals generated with generative AI tools can perform on par with human-made assets. In aggregate testing highlighted by the industry, AI ads matched or beat human creative on CTR, provided the visuals didn’t telegraph “synthetic” (ppc.land). That’s a strong case for using AI ads to scale top-of-funnel concepts across your ads on social media, especially when resourcing is tight and timelines are short.

Copy is a different story. A controlled Google Ads test found human-written copy drove 60% more clicks and lower CPC than machine-generated text, according to Search Engine Journal. The advantage came from nuance, emotion, specificity, and brand voice. If your audience needs reassurance and clarity before taking action, that’s where humans shine. In the AI vs human balance, treat visuals as AI-friendly terrain and treat core messaging as human-led terrain. You’ll scale faster and protect hard-earned trust.

- Use AI to quickly expand visual ideas; keep humans on headlines, primary text, and the CTA to lock in the brand voice.

- Expect gains at the top of the funnel; expect greater human value in the mid-to-lower funnel, where the stakes are higher.

How much do audiences and context matter?

A lot. One independent case study on Meta’s Advantage+ found that human creative generally outperformed, with 11% higher CTR and 12% lower CPC, but younger audiences (25–34) responded better to AI-optimized variants, with a 58% higher CTR in that cohort (Kose Digital). In other words, creative preferences vary. To maximize ROI with AI ads, segment your ads on social media carefully and run an AI vs human comparison by age, funnel stage, and intent signals.

- Map channel-to-audience fit (Gen Z and younger Millennials may embrace novel formats; older cohorts may prefer familiar visuals and plainspoken copy).

- Match asset type to funnel depth: keep experimentation broad at the top; dial up proof and brand voice at the bottom.

- Validate with conversion quality, not just volume. A big CTR is nice; blended CAC is decisive.
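To make the cohort comparison concrete, here is a minimal Python sketch of the arithmetic behind a "higher CTR in that cohort" claim. The `CohortResult` type, the `ai_lift_by_cohort` function, and every number below are hypothetical, invented for illustration; this is not Meta's reporting API, and it is not data from the case study.

```python
from dataclasses import dataclass


@dataclass
class CohortResult:
    cohort: str       # e.g. an age bracket exported from your reporting
    variant: str      # "human" or "ai"
    impressions: int
    clicks: int


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def ai_lift_by_cohort(rows: list[CohortResult]) -> dict[str, float]:
    """Relative CTR lift of the AI variant over the human variant, per cohort.

    0.25 means the AI variant's CTR was 25% higher than the human one.
    Cohorts missing either variant, or with a zero human CTR, are skipped.
    """
    rates: dict[str, dict[str, float]] = {}
    for r in rows:
        rates.setdefault(r.cohort, {})[r.variant] = ctr(r.clicks, r.impressions)

    lifts: dict[str, float] = {}
    for cohort, by_variant in rates.items():
        human, ai = by_variant.get("human"), by_variant.get("ai")
        if human and ai is not None:
            lifts[cohort] = ai / human - 1
    return lifts


# Hypothetical numbers, for illustration only.
rows = [
    CohortResult("25-34", "human", 10_000, 100),
    CohortResult("25-34", "ai", 10_000, 150),
    CohortResult("45-54", "human", 10_000, 120),
    CohortResult("45-54", "ai", 10_000, 90),
]
for cohort, lift in ai_lift_by_cohort(rows).items():
    print(f"{cohort}: AI CTR lift {lift:+.0%}")
```

A lift on CTR alone is only a first read. Running the same comparison on CPA or blended CAC per cohort tells you whether those extra clicks are worth paying for.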

How does creative variety affect delivery and costs?

Meta rewards variety. Feeding the algorithm clusters of near-identical ads, common when teams mass-produce with generative AI tools, can lead to creative fatigue and delivery “starvation.” You’ll see CTR drift down and CPM creep up as sameness sets in (beefed.ai). The fix is straightforward: rotate formats, hooks, and aesthetics so your AI ads stay fresh across your ads on social media.

- Rotate formats: static image, short video, carousel, and UGC-style clips.

- Rotate hooks: surprise stat, myth-vs-fact, bold claim, quick story.

- Rotate visuals: new talent, environments, colorways, and props.

Done right, generative AI tools become a variety engine while your team’s judgment keeps everything aligned to your brand voice. This is the essence of a smart AI vs human partnership: machines accelerate, humans curate.
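If you export daily CTR and CPM for an ad, a rough fatigue check might look like the sketch below. It compares the first few days with the most recent few and flags the ad when CTR has drifted down while CPM has crept up. The function name, the 3-day window, and the 15% and 10% thresholds are all assumptions chosen for illustration; tune them to your own account rather than treating them as benchmarks.

```python
def mean(values: list[float]) -> float:
    return sum(values) / len(values)


def pct_change(old: float, new: float) -> float:
    """Relative change from old to new; 0.0 if the baseline is zero."""
    return (new - old) / old if old else 0.0


def looks_fatigued(
    daily_ctr: list[float],
    daily_cpm: list[float],
    window: int = 3,         # assumption: days averaged at each end
    ctr_drop: float = 0.15,  # assumption: 15% CTR decline
    cpm_rise: float = 0.10,  # assumption: 10% CPM increase
) -> bool:
    """Flag an ad when CTR fell AND CPM rose between the first and last windows."""
    if len(daily_ctr) != len(daily_cpm):
        raise ValueError("CTR and CPM series must cover the same days")
    if len(daily_ctr) < 2 * window:
        return False  # not enough history to compare two windows

    ctr_change = pct_change(mean(daily_ctr[:window]), mean(daily_ctr[-window:]))
    cpm_change = pct_change(mean(daily_cpm[:window]), mean(daily_cpm[-window:]))
    return ctr_change <= -ctr_drop and cpm_change >= cpm_rise


# Hypothetical daily series, for illustration only.
ctr_series = [0.020, 0.020, 0.020, 0.018, 0.016, 0.015, 0.014]
cpm_series = [10.0, 10.0, 10.0, 11.0, 12.0, 12.0, 13.0]
print(looks_fatigued(ctr_series, cpm_series))
```

Requiring both signals keeps the check conservative: a CPM spike on its own can come from auction seasonality rather than sameness.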

Why do novelty and emotion still outperform bulk production?

People stop for something fresh and relevant. Research in luxury advertising showed novelty and informativeness as leading drivers of purchase intent, insights that likely apply beyond luxury categories (ScienceDirect). Human editors excel at emotional lift: connecting moments to meaning, turning proof into pride, and keeping brand voice intact. Use generative AI tools to explore angles, then rely on your team to shape a message that feels unmistakably you. That’s how AI ads pull ahead on social media, even in crowded feeds.

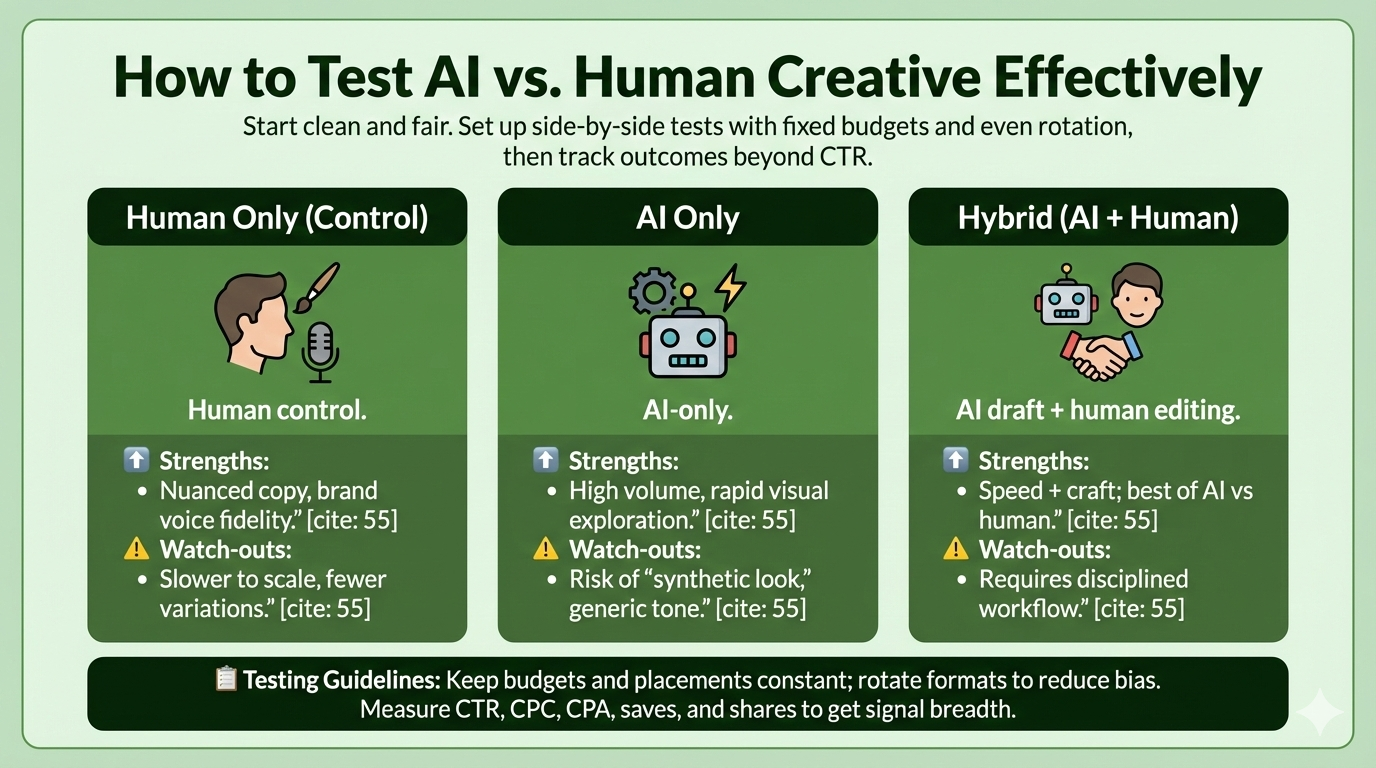

How should we test AI vs. human creative effectively?

Start clean and fair. Set up side-by-side tests with fixed budgets and even rotation, then track outcomes beyond CTR.

- Launch three variants in the same ad set: human control, AI-only, and hybrid (AI draft + human editing).

- Keep budgets and placements constant; rotate formats to reduce bias.

- Measure CTR, CPC, CPA, saves, and shares to get signal breadth.
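The metrics in the list above are simple ratios, so it's easy to compute them yourself from an export. Here is a minimal sketch, assuming one row of totals per variant. The `VariantStats` fields, the `summarize` function, and all the figures are hypothetical; the save and share rates are per impression, which is one reasonable convention, not the only one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VariantStats:
    name: str
    spend: float
    impressions: int
    clicks: int
    conversions: int
    saves: int = 0
    shares: int = 0


def ratio(numerator: float, denominator: float) -> Optional[float]:
    """Divide safely; None signals 'not enough data' rather than zero."""
    return numerator / denominator if denominator else None


def summarize(v: VariantStats) -> dict[str, Optional[float]]:
    return {
        "ctr": ratio(v.clicks, v.impressions),        # clicks per impression
        "cpc": ratio(v.spend, v.clicks),              # spend per click
        "cpa": ratio(v.spend, v.conversions),         # spend per conversion
        "save_rate": ratio(v.saves, v.impressions),   # saves per impression
        "share_rate": ratio(v.shares, v.impressions), # shares per impression
    }


# Hypothetical totals, for illustration only.
variants = [
    VariantStats("human", 500.0, 50_000, 600, 30, saves=40, shares=25),
    VariantStats("ai_only", 500.0, 50_000, 650, 25, saves=30, shares=20),
    VariantStats("hybrid", 500.0, 50_000, 700, 35, saves=45, shares=30),
]
for v in variants:
    rounded = {k: (round(x, 4) if x is not None else None)
               for k, x in summarize(v).items()}
    print(v.name, rounded)
```

Returning `None` for a variant with no conversions avoids reporting a misleading CPA of zero.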

| Variant | Strength | Watch-outs |
|---|---|---|
| Human Only | Nuanced copy, brand voice fidelity | Slower to scale, fewer variations |
| AI Only | High volume, rapid visual exploration | Risk of “synthetic look,” generic tone |
| Hybrid | Speed + craft; best of AI vs human | Requires disciplined workflow |

In our client work, hybrid wins most often on blended CAC because it marries the velocity of generative AI tools with the clarity and trust of human editing. If you want a second set of eyes on test design, we’re happy to help. Learn about our services or contact us to get started.

Does labeling AI-generated ads affect performance?

Labels change perception, and perception impacts engagement. Platforms are moving toward transparency on synthetic media, and regulators in some regions are formalizing disclosure requirements (Euronews). In tests cited by industry coverage, when viewers believed an image was synthetic, engagement dropped, even if the creative was otherwise strong (ppc.land).

- Use real people and real products when possible, even in AI-supported compositions.

- Pair AI visuals with clear, human-written copy that reflects your brand voice.

- Offer context on landing pages; clarity builds trust in AI ads on social media.

How can we keep AI outputs aligned with our brand voice?

Start with guardrails. Feed your system with approved assets, winning creative, messaging pillars, FAQs, and style guidance. Prompt with constraints (“sound like us by…,” “never claim…”), then route outputs through human QA. This blend ensures generative AI tools amplify your strengths without eroding the soul of your storytelling. It also gives your AI-assisted variants a fair shot in an AI vs human test.

- Define a brand voice checklist (tone, clarity, claims, emotional stance).

- Maintain a do/don’t library; use read-back prompts to self-audit tone.

- Document approvals to keep your AI ads on social media consistent.
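A do/don’t library can double as a lightweight pre-check before copy reaches a human editor. The sketch below is one way to do that, under stated assumptions: the `check_copy` function, the phrase lists, and the character limit are hypothetical examples, and the matching is a plain case-insensitive substring search. It catches obvious misses; it does not judge tone.

```python
from typing import Optional, Sequence


def check_copy(
    text: str,
    banned: Sequence[str],
    required: Sequence[str] = (),
    max_chars: Optional[int] = None,
) -> list[str]:
    """Return a list of checklist issues; an empty list means no flags."""
    issues: list[str] = []
    lowered = text.lower()

    for phrase in banned:
        if phrase.lower() in lowered:
            issues.append(f"banned phrase: {phrase}")
    for phrase in required:
        if phrase.lower() not in lowered:
            issues.append(f"missing phrase: {phrase}")
    if max_chars is not None and len(text) > max_chars:
        issues.append(f"too long: {len(text)} > {max_chars} chars")
    return issues


# Hypothetical do/don't entries, for illustration only.
DONT = ["guaranteed", "miracle"]
DO = ["free trial"]

draft = "Guaranteed results in 7 days!"
for issue in check_copy(draft, DONT, DO, max_chars=40):
    print(issue)
```

Treat a clean result as "ready for human QA," not "approved." The editor still owns the final call on voice.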

What hybrid workflow consistently wins?

Here’s a field-tested loop we’ve refined across industries. It’s warm, direct, and practical, designed to protect brand voice while you scale with generative AI tools:

- Train: Compile your winning ads, narratives, FAQs, and product truth. This is the heartbeat of your brand voice.

- Generate: Draft 20–40 variations per theme with generative AI tools: headlines, intros, CTAs, and visual mood boards.

- Humanize: Editors add persuasive frameworks (e.g., PAS, promise-proof-proposal), sharpen specificity, and ensure fit.

- Test: Run AI vs human vs hybrid variants in the same ad set, segmented by age and funnel.

- Learn: Feed winners back into prompts; keep evolving your library.

Most marketers now use AI daily, but 97% still review outputs manually, and 58% caution against over-reliance. That’s not fear, it’s wisdom. Use AI ads to boost speed; let people steer the message (Yahoo Tech).

How do we keep creative fresh without losing coherence?

Think in families, not clones. Build a shared spine (benefit, proof, tone) and explore diverse expressions around it. This keeps AI ads coherent and keeps your ads on social media lively.

- Weekly: Introduce 2–3 new angles across top performers.

- Biweekly: Retire bottom quartile; expand winners to new segments.

- Monthly: Refresh visual systems (color, props, talent) to avoid sameness (beefed.ai).
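The biweekly "retire bottom quartile" step is easy to automate once you pick a ranking metric. This sketch ranks ads by CPA, which is an assumption on our part; blended CAC or ROAS would work the same way. The function name, ad names, and CPAs are hypothetical.

```python
def split_bottom_quartile(
    cpa_by_ad: dict[str, float],
) -> tuple[list[str], list[str]]:
    """Split ads into (keep, retire), retiring the worst quarter by CPA.

    Lower CPA is better. The quartile size is rounded down, so with fewer
    than four ads nothing is retired.
    """
    ranked = sorted(cpa_by_ad, key=lambda ad: cpa_by_ad[ad])  # best first
    n_retire = len(ranked) // 4
    if n_retire == 0:
        return ranked, []
    return ranked[:-n_retire], ranked[-n_retire:]


# Hypothetical ad names and CPAs, for illustration only.
cpas = {
    "hook_stat": 12.0, "hook_myth": 14.5, "hook_story": 11.0, "hook_claim": 19.0,
    "ugc_clip": 13.0, "carousel": 16.0, "static_a": 22.0, "static_b": 15.0,
}
keep, retire = split_bottom_quartile(cpas)
print("keep:", keep)
print("retire:", retire)
```

Only rank ads that have had enough spend to produce a stable CPA; a brand-new variant with two conversions shouldn't be retired on noise.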

Where exactly does human copy outperform?

Three places stand out in the AI vs human trade-off, and each links back to brand voice:

- Emotional lift: Humans shape references and contrasts that spark feeling. One controlled Google Ads test showed more clicks and lower CPC for human-written copy (Search Engine Journal).

- Specificity under pressure: When every character counts, precision wins. Humans sense the difference between hype and honest clarity.

- Voice fidelity: AI can imitate, but humans keep your brand voice unmistakable and memorable.

Use generative AI tools to draft multiple headline directions; let writers refine a tight shortlist. Across ads on social media, this hybrid structure leads to reliable gains for AI ads without sacrificing trust.

Which platforms and tools should we choose?

Let goals define your stack. If you need scale, lean on generative AI tools with strong personalization and the ability to generate variants. For governance, pick solutions that support reusable style guides, QA checkpoints, and legal approvals; your brand voice depends on it. For visuals, choose tools that handle compositing and skin tones gracefully, then mix in stock or UGC to avoid the uncanny valley. Document your rules so your AI vs human testing stays compliant and consistent across your ads on social media.

Best strategies to humanize AI-generated ads

- Use realistic faces and candid angles; invite connection, not perfection.

- Ground products in real-world scenes: believable shadows, reflections, and context.

- Align every headline and CTA to your brand voice; rephrase until it sounds like you.

- Lead with proof over hype, quantify benefits, and show receipts.

- Add one twist per asset (stat or visual flourish); keep novelty focused.

These moves keep AI ads out of the uncanny valley and firmly in the performance lane. If you want a quick gut-check before launch, ask, “Does this sound like our brand voice?” If not, you’re one edit away.

Quick reference: signals that move Meta delivery

- Audience feedback: negative feedback (such as people hiding or reporting an ad) and positive interactions both affect delivery.

- Asset distinctiveness: variety curbs fatigue and keeps CPM in check (beefed.ai).

- Perceived authenticity: believable visuals and confident, clear copy win more auctions (ppc.land).

FAQ: Long-tail answers for marketers building a hybrid engine

Q1) How do I run compliant AI ads on Meta as labeling rules evolve?

Build disclosure into templates and enable it via a toggle. Platforms are increasingly labeling synthetic media, and some regions are formalizing requirements for AI-generated ads, Euronews reports. Keep your docs updated, route all claims through review, and maintain a single source of truth for your brand voice. This lets you adapt quickly without rewriting every asset. It also keeps your AI ads trustworthy across your ads on social media.

Q2) Will labeled AI visuals hurt ad results, and what are the best tools for maintaining brand voice in advertising?

Meta does not penalize you for using AI. However, perception matters. When viewers recognize a visual as synthetic, engagement can dip, even if the creative is solid (ppc.land). This is where the best tools for maintaining brand voice in advertising matter most. Pair AI visuals with human-edited copy, transparent proof, and brand-approved language frameworks. The combination keeps AI ads feeling authentic and consistent inside an AI vs human test, especially across ads on social media.

Q3) What’s the fastest way to structure a fair AI vs human creative test using top-rated AI platforms for ad personalization?

Split your budget evenly across three variants (human-only, AI-only, hybrid) in the same ad set. Normalize placements and rotate formats. Many top-rated AI platforms for ad personalization can accelerate variant creation, but fairness comes from controlling variables. Track through CPA and secondary actions (saves, shares), not just CTR. In our client work, hybrid most often wins on blended CAC, as AI delivers speed while humans preserve brand intent and judgment.
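Before you call a winner, it helps to check whether a CTR gap is bigger than chance. One common approach is a two-proportion z-test, sketched below with only the Python standard library. This is our suggestion rather than something the cited studies prescribe, and the click and impression counts are hypothetical. It assumes impressions are independent, which real delivery only approximates.

```python
import math


def two_proportion_z_test(
    clicks_a: int, impressions_a: int,
    clicks_b: int, impressions_b: int,
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in CTR between A and B."""
    p_a = clicks_a / impressions_a
    p_b = clicks_b / impressions_b
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Hypothetical counts, for illustration only: hybrid vs. human control.
z, p = two_proportion_z_test(700, 50_000, 600, 50_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value says the CTR gap is unlikely to be noise. It says nothing about CPA, so run the same kind of check on conversions before shifting budget.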

Q4) How often should I rotate creative to avoid fatigue with AI ads?

Run weekly micro-iterations and monthly thematic refreshes. Platforms favor distinct assets; sameness invites starvation and rising CPMs (beefed.ai). Use generative AI tools to propose batches, then have editors select the few that amplify your brand voice. This cadence keeps your ads on social media lively without losing coherence in an AI vs human environment.

Q5) Why do younger audiences sometimes respond better to AI-optimized creative?

In at least one Advantage+ case study, the 25–34 segment showed a stronger response to AI-optimized variants, while older cohorts preferred traditional creative (Kose Digital). Younger users often engage with novel formats and faster iteration cycles, areas where AI ads excel. The takeaway: segment your ads on social media and run an AI vs human comparison by age. Keep brand voice consistent while tailoring your creative approach to each cohort.

The bottom line: Scale with AI. Win with humans. Grow with intent.

Meta isn’t penalizing your tools; it’s tuning to your results. When you use generative AI tools for speed and exploration, and reserve human creativity for story, proof, and brand voice, you get the best of both worlds. AI ads can match or beat human visuals when they feel authentic. Human writers still deliver the emotional clarity that converts. The smartest teams segment audiences, rotate assets to beat fatigue, and run disciplined AI vs human tests across their ads on social media.

If you’re ready to build that hybrid engine, we’re here for you. BusySeed blends strategy, creative craft, and platform know-how, always warm, direct, and on your level.

Book a strategy session, explore our services, or learn more about us. Let’s make your next quarter your best yet.

Works Cited

- Beefed.ai. “Detect and Fix Creative Fatigue in Paid Social.” Beefed.ai, https://beefed.ai/en/detect-fix-creative-fatigue-paid-social. Accessed 4 Feb. 2026.

- Euronews. “South Korea Says Advertisers Must Label AI-Generated Ads.” Euronews Next, 10 Dec. 2025, https://www.euronews.com/next/2025/12/10/south-korea-says-advertisers-must-label-ai-generated-ads. Accessed 4 Feb. 2026.

- Kose Digital. “Unlocking Meta Advantage+: What Our Tests Revealed.” Kose Digital, https://www.kosedigital.com/unlocking_meta_advantage/. Accessed 4 Feb. 2026.

- ppc.land. “AI Ads Perform as Well as Humans—If They Don’t Look AI-Made.” ppc.land, https://ppc.land/ai-ads-perform-as-well-as-humans-if-they-dont-look-ai-made/. Accessed 4 Feb. 2026.

- ScienceDirect. “AI in Luxury Brand Communications: How Quality Dimensions Impact Advertising Effectiveness.” Journal of Retailing and Consumer Services, Elsevier, https://www.sciencedirect.com/science/article/pii/S0969698925001821. Accessed 4 Feb. 2026.

- Search Engine Journal. “Humans vs. Machines: Ad Copy Content Test Data Study.” Search Engine Journal, https://www.searchenginejournal.com/humans-vs-machines-ad-copy-content-test-data-study/509942/. Accessed 4 Feb. 2026.

- Yahoo Tech. “How AI Is Changing UK Marketing Creativity (and Why Humans Still Matter).” Yahoo News, https://tech.yahoo.com/ai/articles/ai-changing-uk-marketing-creativity-142215633.html. Accessed 4 Feb. 2026.